If you’ve been watching television coverage of the London 2012 Olympics, you’ve probably seen plenty of impressive visual effects. The underlying technology for generating these effects is quite similar to that used for film and television production, with the caveat that all the effects have to be generated in real time (or at least quickly enough to be shown in an instant replay).

The most common example, present in the coverage of almost every Olympic sport, is a motion graphic superimposed on the raw video that labels the lanes that runners or swimmers are in, or highlights which line a competitor has to beat in order to win a race. These types of effects have been around for a while, and are created using feature tracking and well-calibrated cameras whose position and orientation are known relative to the 3D plane where the graphics should go (e.g., the track or pool surface).

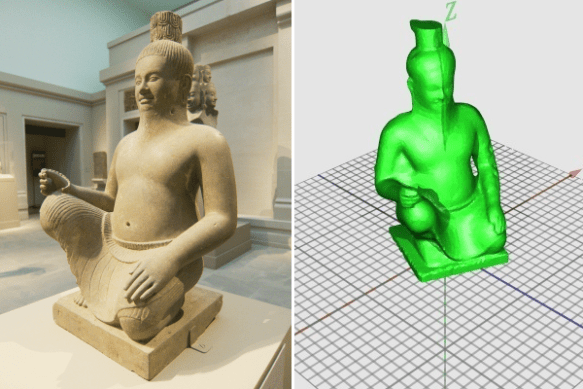
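To make the calibrated-camera idea concrete, here is a minimal sketch (not any broadcaster's actual pipeline) of how a point on a flat surface such as the track maps into the image once the camera is calibrated. It assumes a pinhole camera with intrinsics `K`, rotation `R`, and translation `t`, and a world frame chosen so that the surface is the plane Z = 0. All the numbers and function names are hypothetical, chosen only for illustration.

```python
import numpy as np

def plane_to_image_homography(K, R, t):
    """3x3 homography taking plane points (X, Y, 1) on Z = 0 to homogeneous pixels."""
    # For points with Z = 0, the third column of R drops out of K [R | t].
    return K @ np.column_stack((R[:, 0], R[:, 1], t))

def project_plane_points(H, pts_xy):
    """Map an (N, 2) array of plane coordinates to (N, 2) pixel coordinates."""
    pts = np.asarray(pts_xy, dtype=float)
    homog = np.column_stack((pts, np.ones(len(pts))))
    proj = homog @ H.T
    return proj[:, :2] / proj[:, 2:3]  # divide out the homogeneous scale

# Hypothetical calibration: camera 10 units above the surface, looking straight at it.
K = np.array([[1000.0, 0.0, 640.0],
              [0.0, 1000.0, 360.0],
              [0.0, 0.0, 1.0]])
R = np.eye(3)
t = np.array([0.0, 0.0, 10.0])

H = plane_to_image_homography(K, R, t)
lane_line = [(0.0, 0.0), (1.0, 0.0)]  # endpoints of a line drawn on the surface
pixels = project_plane_points(H, lane_line)
print(pixels)
```

Drawing the graphic then amounts to warping it with `H` for every frame; in a live broadcast, feature tracking would be what keeps `R` and `t` up to date as the camera pans and zooms.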

There’s a cool, more advanced effect being deployed in diving and gymnastics coverage in which the camera seems to swivel around the athlete in 3D as they’re frozen in mid-air (à la The Matrix). This is done with a combination of real-time foreground segmentation from multiple cameras, a fast multiview stereo algorithm, and production visual effects software like the Foundry’s Nuke. The underlying approach is clearly explained in this video about the i3DLive system developed by the Foundry, the University of Surrey, and BBC Research and Development. Sorry I couldn’t figure out how to embed it here, but it’s really worth a look.

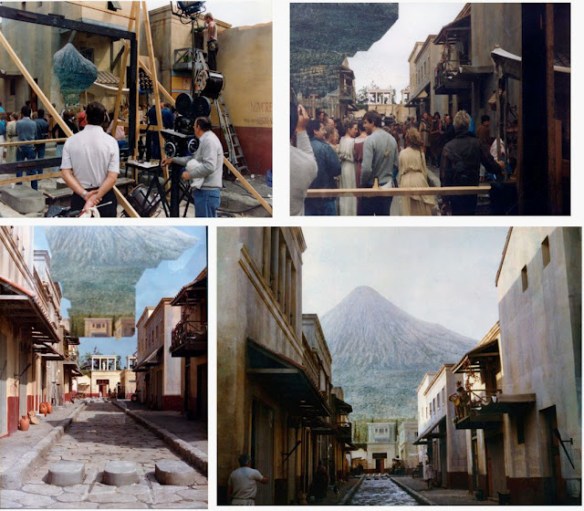
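As a rough illustration of the first step, foreground segmentation, here is a toy background-differencing sketch. This is a stand-in for illustration only: it is not the method used by i3DLive, which isn't detailed here, and it assumes a static camera with a clean background image available. The function name, threshold, and tiny synthetic frames are all hypothetical.

```python
import numpy as np

def foreground_mask(frame, background, threshold=30.0):
    """Mark pixels that differ from a static background by more than `threshold`."""
    diff = np.abs(frame.astype(float) - background.astype(float))
    if diff.ndim == 3:
        # Color image: use the largest difference over the channels.
        diff = diff.max(axis=2)
    return diff > threshold

# Synthetic 4x4 grayscale example: a bright 2x2 "athlete" on a flat background.
background = np.full((4, 4), 100, dtype=np.uint8)
frame = background.copy()
frame[1:3, 1:3] = 200

mask = foreground_mask(frame, background)
print(mask.astype(int))
```

With silhouettes like `mask` from several calibrated cameras, a multiview reconstruction step can then estimate the athlete's 3D shape, which is what makes the virtual camera move possible.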

There’s a ton of interesting information on the BBC Research and Development website. For example, check out these pages on augmented reality athletics, markerless motion capture for biomechanics, and all sorts of “Production Magic” techniques. These are great applications of computer vision for visual effects!

In the US, many effects of this type (e.g., the virtual first-down line in football) are created by a company called Sportvision; plenty of video examples can be seen here.